How Healthy Friction Between Agents Catches Real Bugs

Multi-agent systems work best when agents disagree. Not randomly, not destructively, but through structured friction where incompatible mandates force each agent to see what the other cannot. This is not a metaphor about collaboration. It is a concrete engineering pattern, and the bugs it catches are ones that a single agent, however sophisticated, tends to miss.

The intuition is simple. A developer agent that writes code and then reviews its own work will confirm its own reasoning. The same mental model that produced the implementation will evaluate it, and that model has blind spots baked in. Daniel Kahneman's work on cognitive bias documents how reliably people fail to challenge their own conclusions. An analogous failure mode applies to LLM agents: the context window that generated the code carries the same assumptions into the review, which makes it a poor context for finding the code's flaws.

Split the work across two agents with different mandates, and the reviewer no longer inherits the author's blind spots.

The Seam Problem

The most valuable bugs do not live inside functions. They live at seams: the boundary between what one agent validates locally and what the project enforces globally. A developer agent runs a test file. Tests pass. The agent signals success. But the project's pyproject.toml specifies a coverage threshold that applies across the entire test suite, and the new code contains lines that the new tests never execute, which pulls overall coverage below that threshold. The developer agent never checks global coverage because its mandate is local correctness. The QA agent, whose mandate is project-wide verification, catches it immediately.
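The gap between the local check and the global one can be sketched in a few lines of Python. This is an illustration only: the per-file line counts, the 90% threshold, and every name below are assumptions, not values taken from the run described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileCoverage:
    """Executable and covered line counts for one source file (made-up data)."""
    path: str
    statements: int
    covered: int


def total_coverage(files: list[FileCoverage]) -> float:
    """Project-wide coverage as a percentage of all executable lines."""
    statements = sum(f.statements for f in files)
    covered = sum(f.covered for f in files)
    return 100.0 * covered / statements if statements else 100.0


def local_check(tests_passed: bool) -> bool:
    """The developer agent's view: did the tests it ran pass?"""
    return tests_passed


def global_check(files: list[FileCoverage], threshold: float) -> bool:
    """The QA agent's view: does the whole project meet the threshold?"""
    return total_coverage(files) >= threshold


THRESHOLD = 90.0  # assumed value; the real one lives in the project's config

before = [FileCoverage("core.py", 200, 181)]
# New code arrives with a handful of lines that no test executes.
after = before + [FileCoverage("runner.py", 40, 33)]

print(local_check(tests_passed=True))   # True: the developer signals success
print(global_check(before, THRESHOLD))  # True: 90.5% before the change
print(global_check(after, THRESHOLD))   # False: roughly 89.2% after it
```

Both checks are correct on their own terms. The bug only shows up when someone is responsible for the second one.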

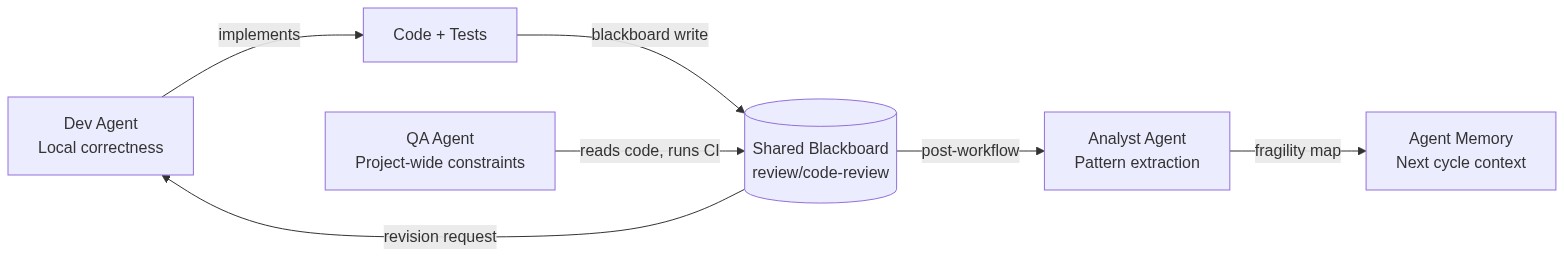

This is not a hypothetical class of bug. In a recent orchestration run, a QA agent posted a structured revision request to the shared blackboard: seven uncovered lines in runner.py's main function. Unary extra-argument error paths. Ternary clamp branches. The __main__ block. Every one of those gaps was invisible to the developer agent that wrote the surrounding tests. The developer agent had verified that its tests passed. It had not verified that its tests were sufficient.

The QA agent sent the revision request. The developer agent received it, read the blackboard findings, fixed the implementation, re-ran tests, and signaled completion. Two message exchanges, clean resolution. The whole cycle took minutes. Without the friction, those seven coverage gaps would have shipped silently.

Three Structural Properties

Productive friction is not just "have two agents look at the code." Most multi-agent review setups produce noise, not signal. The difference between useful disagreement and expensive theater comes down to three structural properties.

Narrow, non-overlapping mandates. The developer agent's job is to implement the function and write tests that exercise its behavior. The QA agent's job is to verify that the implementation satisfies project-wide constraints: coverage thresholds, linting rules, CI configuration, type safety. These mandates must not overlap. When both agents check the same thing, you get redundancy without diversity. When each agent owns a distinct verification surface, disagreements point at real gaps.
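One way to keep mandates from drifting into each other is to write them down as sets of named checks and assert that the sets are disjoint. The sketch below assumes a hypothetical configuration shape; the check names are paraphrased from the paragraph above and do not come from any real workflow definition.

```python
from itertools import combinations

# Hypothetical mandate definitions: each agent owns a set of named checks.
MANDATES: dict[str, set[str]] = {
    "developer": {"function_behavior", "unit_tests_pass"},
    "qa": {"coverage_threshold", "lint_rules", "ci_config", "type_safety"},
}


def overlapping_checks(
    mandates: dict[str, set[str]],
) -> dict[tuple[str, str], set[str]]:
    """Return every pair of agents that shares at least one check."""
    overlaps: dict[tuple[str, str], set[str]] = {}
    for a, b in combinations(sorted(mandates), 2):
        shared = mandates[a] & mandates[b]
        if shared:
            overlaps[(a, b)] = shared
    return overlaps


print(overlapping_checks(MANDATES))  # {} means no check is owned twice
```

An empty result means every check has exactly one owner. A non-empty result names the redundancy directly, which makes it easy to decide which agent should give the check up.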

Structured communication with specific references. "This code has problems" is useless feedback. "Line 213 of core.py: separator parameter is keyword-only, causing repeat('abc', 3, '-') to fail" is actionable. The communication channel between agents must enforce specificity. In practice, this means agents write structured findings to a shared blackboard with file paths, line numbers, and reproduction steps. The developer agent reads those findings and knows exactly what to fix. No ambiguity, no interpretation overhead.
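A minimal sketch of what "enforce specificity" could look like, assuming a finding is a small record with required fields. The Finding class and the body of repeat are hypothetical; only the file name, line number, and failing call come from the example above.

```python
from dataclasses import dataclass
from typing import Callable


def repeat(text: str, count: int, *, separator: str = "") -> str:
    """Hypothetical stand-in for the flagged function: separator is keyword-only."""
    return separator.join([text] * count)


@dataclass(frozen=True)
class Finding:
    """One blackboard entry. Every field is required, so vague feedback is rejected."""
    path: str
    line: int
    summary: str
    reproduction: str

    def __post_init__(self) -> None:
        if not self.path or self.line < 1:
            raise ValueError("a finding needs a file path and a 1-based line number")
        if not self.summary.strip() or not self.reproduction.strip():
            raise ValueError("a finding needs a summary and reproduction steps")


def raises_type_error(call: Callable[[], object]) -> bool:
    """Run a reproduction and report whether it raises TypeError."""
    try:
        call()
    except TypeError:
        return True
    return False


finding = Finding(
    path="core.py",
    line=213,
    summary="separator parameter is keyword-only",
    reproduction="repeat('abc', 3, '-')",
)

print(raises_type_error(lambda: repeat("abc", 3, "-")))            # True
print(raises_type_error(lambda: repeat("abc", 3, separator="-")))  # False
```

The reproduction field is what makes the finding checkable: the developer agent can run it before the fix to confirm the failure and after the fix to confirm it is gone.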

An independent verification gate. After the developer revises and the QA agent re-reviews, a third check confirms that the resolution actually holds. This prevents the social dynamic where the reviewer accepts a fix out of fatigue rather than verification. In automated systems, this gate runs the full test suite, checks coverage, and verifies that no new issues were introduced by the fix. The gate has no opinion about the code. It only has facts.
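The gate can be sketched as a pure function over measured facts. How those facts are collected (which test runner, which coverage tool) is left out on purpose; the field names and the threshold argument are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateFacts:
    """Measurements taken after a revision. All fields are assumed inputs."""
    tests_failed: int
    coverage_percent: float
    new_issues: int


def gate(facts: GateFacts, coverage_threshold: float) -> tuple[bool, list[str]]:
    """Pass only if every measured fact holds; return the reasons for any failure."""
    reasons: list[str] = []
    if facts.tests_failed > 0:
        reasons.append(f"{facts.tests_failed} test(s) failed")
    if facts.coverage_percent < coverage_threshold:
        reasons.append(
            f"coverage {facts.coverage_percent:.1f}% is below {coverage_threshold:.1f}%"
        )
    if facts.new_issues > 0:
        reasons.append(f"{facts.new_issues} new issue(s) introduced by the fix")
    return (not reasons, reasons)


passed, reasons = gate(GateFacts(0, 89.2, 0), coverage_threshold=90.0)
print(passed, reasons)
```

Because the function takes no reviewer verdict as input, neither agent's approval can talk it into passing.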

When all three properties hold, the friction loop converges. When any one is missing, it degrades into either rubber-stamping or infinite revision cycles.

Emergent Behaviors Worth Keeping

The most surprising outcome of structured agent friction is what happens at the edges of the designed system. In one orchestration run, while the QA agent was still writing its review amendments, the developer agent proactively ran conflict pre-checks against the main branch. Nobody programmed that coordination. It emerged from the developer agent having enough context about the QA agent's likely findings to anticipate what verification would be needed next.

Similarly, a passive observer role, originally designed to record what happened during dev-QA exchanges, evolved into an active synthesizer. It started generating action items from the patterns of disagreement it observed. When QA repeatedly flagged coverage gaps in the same module, the observer identified that module as architecturally fragile and recommended targeted refactoring. The observer's mandate was narrow (watch and record), but the accumulated context from multiple friction cycles gave it enough signal to produce original analysis.

This mirrors a principle from W. Ross Ashby's cybernetics work, the law of requisite variety: a control system needs at least as much variety as the system it regulates. A single agent reviewing its own code has low variety. Two agents with incompatible mandates have higher variety. An observer watching the pattern of disagreements between those two agents has variety at a different level entirely, one that can detect structural weaknesses invisible to either participant.

When Friction Costs More Than It Catches

Not every change needs two agents arguing about it.

A single-line typo fix does not benefit from a QA agent running 67 shell commands and 15 heartbeat calls over 11 minutes. The coordination overhead (agent registration, context loading, message routing, blackboard reads and writes, signal acknowledgment) is real. In short workflows, that overhead can consume a meaningful percentage of the total time budget. For a function implementation that takes three minutes, spending another eight minutes on structured QA review is a net loss if the implementation is trivially correct.

The deciding factor is blast radius. Changes confined to a single file with no downstream dependencies are safe for single-agent execution. Changes that touch configuration, modify shared state, or cross module boundaries benefit from the full friction pipeline. The February sessions made this clear: utility function implementations (truncate, repeat, join) completed cleanly through dev-QA cycles, but the interesting bugs (coverage threshold failures, keyword-only parameter mismatches, scope creep in documentation changes) all lived at boundaries between components.

A reasonable heuristic: if the change touches files that other parts of the system depend on, route it through friction. If it's self-contained, let a single agent handle it.
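The heuristic is simple enough to write as a routing function. The sketch below assumes the orchestrator already has a map from each file to the files it imports; that map, the CONFIG_FILES set, and the two route labels are all hypothetical names chosen for illustration.

```python
# Assumed set of configuration files; extend it to fit the project.
CONFIG_FILES = {"pyproject.toml"}


def dependents_of(path: str, imports: dict[str, set[str]]) -> set[str]:
    """Files that import `path`, given a map of file -> files it imports."""
    return {src for src, targets in imports.items() if path in targets}


def route(changed: set[str], imports: dict[str, set[str]]) -> str:
    """Pick 'single-agent' only for one self-contained, non-config file."""
    if len(changed) != 1:
        # Anything other than exactly one file is treated conservatively.
        return "friction"
    (path,) = changed
    if path in CONFIG_FILES or dependents_of(path, imports):
        return "friction"
    return "single-agent"


# Made-up dependency map: runner.py imports core.py, nothing imports notes.py.
IMPORTS = {"runner.py": {"core.py"}, "core.py": set(), "notes.py": set()}

print(route({"notes.py"}, IMPORTS))        # single-agent
print(route({"core.py"}, IMPORTS))         # friction
print(route({"pyproject.toml"}, IMPORTS))  # friction
```

The function errs toward friction whenever it cannot prove the change is self-contained, which matches the cost asymmetry: an unnecessary review wastes minutes, while a skipped one can ship a bug.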

The Blackboard as Institutional Memory

One pattern that stabilized across dozens of runs was the blackboard namespace convention. QA agents consistently wrote findings to review/code-review on the shared blackboard. Post-workflow analysts read from that namespace to extract learnings. This convention was never specified in any workflow definition. It emerged from agents independently choosing a reasonable namespace, and it persisted because it worked.

The blackboard serves a dual purpose. In the short term, it is the communication channel that makes structured friction possible. The QA agent writes specific findings, the developer agent reads them, revisions target exactly what was flagged. In the long term, the accumulated blackboard entries become a record of where disagreements happen most often. Modules that generate repeated QA findings are modules with architectural problems. The friction does not just catch individual bugs. Over time, it produces a map of codebase fragility.
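Turning accumulated findings into that map takes little more than a counter. The sketch below assumes blackboard entries can be read as (namespace, file path) pairs; the entries themselves are made up, and only the review/code-review namespace comes from the runs described here.

```python
from collections import Counter

# Hypothetical blackboard contents: (namespace, file path) per entry.
ENTRIES = [
    ("review/code-review", "runner.py"),
    ("review/code-review", "core.py"),
    ("review/code-review", "runner.py"),
    ("status/heartbeat", "runner.py"),
    ("review/code-review", "runner.py"),
]


def fragility_map(
    entries: list[tuple[str, str]],
    namespace: str = "review/code-review",
) -> list[tuple[str, int]]:
    """Count QA findings per file in one namespace, most-flagged file first."""
    counts = Counter(path for ns, path in entries if ns == namespace)
    return counts.most_common()


print(fragility_map(ENTRIES))  # [('runner.py', 3), ('core.py', 1)]
```

Entries outside the review namespace are ignored, so operational chatter such as heartbeats does not inflate a module's count.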

Why This Matters Beyond Agents

The pattern generalizes. Any system where the author and reviewer share the same mental model will systematically miss the same class of bugs. Code review processes that pair developers from the same team, working on the same feature, with the same assumptions about how the system behaves, will confirm each other's reasoning rather than challenging it. The structural fix is mandate divergence: ensure the reviewer is looking for something fundamentally different from what the author was optimizing for.

In human teams, this is hard to enforce socially. People default to agreement. In agent systems, it is a configuration choice. You define the QA agent's mandate as "verify project-wide constraints" and the developer agent's mandate as "implement correct local behavior," and the system produces friction automatically. The disagreements are not personal. They are structural. And structural disagreements, when channeled through specific, actionable communication, catch bugs that no other mechanism will find.

The lesson is not "use more agents." The lesson is that verification quality depends on the divergence between the verifier's perspective and the author's perspective. Maximize that divergence, give it a structured channel to express itself, and bugs that were previously invisible become obvious. The friction is the feature.