Deep Dive: memory extraction

Memory extraction is the practice of pulling structured signals out of generated text so that sequential systems can accumulate context instead of starting blank every time. It sounds simple. It is not.

The underlying problem has a close analogue in cognitive science. Endel Tulving's distinction between episodic memory (what happened) and semantic memory (what it means) maps directly onto the engineering challenge. A journal entry is episodic: "today I refactored the worker registry." A memory extraction pass converts that into semantic signals: the decision to use dynamic discovery, the open question of whether plugin-style extensions will hold under concurrent workflows, the theme of decoupling infrastructure from specific project assumptions. Without this conversion step, every generated entry exists in isolation. With it, the system builds a continuous thread.

The architecture is deceptively minimal.

Three Moving Parts

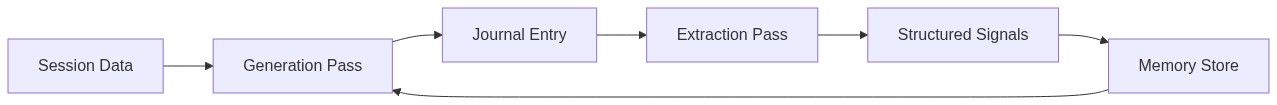

Generation and extraction are separate, stateless passes. Generation takes session telemetry and prior memory as input and produces prose. Extraction takes that prose as input and produces structured signals. A third component, the memory store, accumulates those signals across days: extraction only ever appends to it, and removal is left to the per-category lifecycle rules.

Each pass is a subprocess call to an LLM. No persistent state inside the model. No conversation history carried between invocations. The memory store is a JSON file on disk: themes, decisions, open questions, insights. Four categories, each with different lifecycle rules.

This separation matters because it makes the system debuggable. When output quality degrades, you can read the memory file directly. You can edit it. You can delete a stale theme or promote an open question to a decision. Try doing that with an LLM's internal context window.
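A minimal sketch of such a store, assuming each category is a flat list of strings in a single JSON file. The `MemoryStore` class, its field names, and `promote_question` are hypothetical, not taken from the original pipeline; the point is that plain, indented JSON is what makes the hand-editing described above practical.

```python
import json
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class MemoryStore:
    """Hypothetical on-disk schema: one list of strings per category."""

    themes: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)
    insights: list[str] = field(default_factory=list)

    @classmethod
    def load(cls, path: Path) -> "MemoryStore":
        """Read the store; a missing file is treated as an empty store."""
        if not path.exists():
            return cls()
        return cls(**json.loads(path.read_text(encoding="utf-8")))

    def save(self, path: Path) -> None:
        """Write indented JSON so the file stays easy to read and edit by hand."""
        path.write_text(json.dumps(asdict(self), indent=2) + "\n", encoding="utf-8")

    def promote_question(self, question: str, decision: str) -> None:
        """Manual edit: retire an open question and record it as a decision.

        Raises ValueError if the question is not in the store.
        """
        self.open_questions.remove(question)
        self.decisions.append(decision)
```

Because the file is just JSON, the same edit works equally well in a text editor; the method only makes it scriptable.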

Why Four Categories

Plain-text summarization was the first thing I tried. It works for about three days. By day ten, a summary-of-summaries has lost every specific detail that made the original entries useful. "Continued working on infrastructure improvements" tells you nothing. It's the semantic equivalent of silence.

Structured extraction into four discrete categories solves this by forcing the extraction pass to commit to specificity. A theme is not "working on infrastructure" — it's "decoupling worker registration from hardcoded project directories." A decision is not "improved the system" — it's "switched to dynamic workflow discovery via plugin-style extensions." An open question is not "wondering about scalability" — it's "does plugin-style extension hold up when four workflows run concurrently in isolated worktrees?"
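One way to hold the extraction pass to this is to request the four categories as JSON and validate the reply before it touches the store. The prompt wording, the key names, and `parse_extraction` are illustrative assumptions; only the four categories come from the text.

```python
import json

# Illustrative prompt; only the four category names come from the text.
EXTRACTION_PROMPT = """Read the journal entry below and return a JSON object with
exactly these keys: "themes", "decisions", "open_questions", "insights".
Each value is a list of short strings. Name the concrete subject
("decoupling worker registration from hardcoded project directories"),
never a generic one ("working on infrastructure").

Entry:
{entry}
"""

CATEGORIES = ("themes", "decisions", "open_questions", "insights")


def parse_extraction(raw: str) -> dict[str, list[str]]:
    """Validate the model's JSON reply and normalize it to four lists.

    Unknown keys are dropped, missing or malformed categories become empty
    lists, and non-string or blank items are discarded.
    """
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("extraction output must be a JSON object")
    result: dict[str, list[str]] = {}
    for category in CATEGORIES:
        items = data.get(category, [])
        if not isinstance(items, list):
            items = []
        result[category] = [s.strip() for s in items
                            if isinstance(s, str) and s.strip()]
    return result
```

The prompt is filled with `EXTRACTION_PROMPT.format(entry=entry_text)`; rejecting malformed replies here keeps a single bad extraction from corrupting the store.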

Each category has different decay rules. Themes supersede: when a new theme covers the same ground as an older one, the old one drops out. Open questions resolve into either decisions or insights, then get removed. Decisions persist indefinitely because they're load-bearing context — downstream generation needs to know what was already decided so it doesn't relitigate. Insights accumulate but get compressed when the store grows past a threshold.
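These rules can be sketched as a merge step that runs after each extraction. Two pieces are assumptions, since the text does not say how they are decided: "covers the same ground" is approximated with word-overlap (Jaccard) similarity, and resolved questions arrive as a separate `resolved` argument. Insight compression is a pluggable callable (plausibly an LLM summarization call); the fallback keeps only the newest entries, which is truncation rather than real compression.

```python
import re
from typing import Callable, Optional

Store = dict[str, list[str]]


def _words(text: str) -> set[str]:
    """Lower-cased word set used by the overlap heuristic."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def covers_same_ground(old: str, new: str, threshold: float = 0.5) -> bool:
    """Assumed stand-in for 'same ground': Jaccard overlap of the word sets."""
    a, b = _words(old), _words(new)
    if not a or not b:
        return False
    return len(a & b) / len(a | b) >= threshold


def _append_unique(existing: list[str], incoming: list[str]) -> list[str]:
    """Append incoming items not already present, preserving order."""
    out = list(existing)
    for item in incoming:
        if item not in out:
            out.append(item)
    return out


def merge(store: Store, new: Store, resolved: tuple[str, ...] = (),
          insight_cap: int = 50,
          compress: Optional[Callable[[list[str]], list[str]]] = None) -> Store:
    """Fold one extraction pass into the store using per-category rules."""
    new_themes = new.get("themes", [])
    # Themes supersede: an old theme drops out when a new one overlaps it.
    kept_themes = [t for t in store.get("themes", [])
                   if not any(covers_same_ground(t, n) for n in new_themes)]
    # Open questions: resolved ones are removed; their answers are expected
    # to arrive in this same pass as new decisions or insights.
    open_qs = [q for q in store.get("open_questions", []) if q not in resolved]
    # Insights accumulate, then shrink once the list passes the cap.
    insights = _append_unique(store.get("insights", []), new.get("insights", []))
    if len(insights) > insight_cap:
        # Fallback keeps the newest entries: truncation, not true compression.
        insights = compress(insights) if compress else insights[-insight_cap:]
    return {
        "themes": _append_unique(kept_themes, new_themes),
        # Decisions persist indefinitely: appended, never expired.
        "decisions": _append_unique(store.get("decisions", []),
                                    new.get("decisions", [])),
        "open_questions": _append_unique(open_qs, new.get("open_questions", [])),
        "insights": insights,
    }
```

With the default threshold of 0.5, "decoupling worker registration from project directories" supersedes the longer theme it paraphrases (6 of 7 words shared), while an unrelated theme such as "monolith extraction" survives untouched.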

This structured decay prevents unbounded growth. Without it, the memory store balloons until it crowds out the actual session data in the generation prompt's context window. I watched this happen during a regeneration pass across three months of historical entries: by week six, the memory store was larger than the session input, and the generated entries started reading like reflections on reflections rather than accounts of actual work.
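A cheap guard against that failure is to measure how much of the assembled prompt the memory occupies before each generation call. This sketch uses character counts as a rough proxy for tokens and treats "memory larger than the session input" as the alarm condition, matching the week-six symptom above; both the proxy and the default threshold are assumptions.

```python
import json


def memory_share(memory: dict, session_text: str) -> float:
    """Fraction of (memory + session) characters taken by serialized memory."""
    mem_chars = len(json.dumps(memory))
    total = mem_chars + len(session_text)
    return mem_chars / total if total else 0.0


def memory_crowds_out_session(memory: dict, session_text: str,
                              max_share: float = 0.5) -> bool:
    """True when memory outweighs the session data it is meant to support."""
    return memory_share(memory, session_text) > max_share
```

When the check trips, that is the cue to run insight compression or prune stale themes before generating, rather than letting the prompt drift toward reflections on reflections.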

The Regeneration Test

The real validation came from replaying the full pipeline retroactively over historical data: eighteen automated sessions, each generating a journal entry for a past date, extracting memory from it, and feeding that memory forward into the next day's generation. The entire backfill completed in under an hour.
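The backfill loop itself is small. In this sketch `telemetry_for`, `generate`, `extract`, and `merge` are hypothetical callables standing in for the real telemetry lookup and the two LLM subprocess calls. What matters is the ordering: dates run oldest first, and each day's extraction is merged before the next day's generation reads memory.

```python
from datetime import date, timedelta
from typing import Callable, Optional

Memory = dict[str, list[str]]


def backfill(start: date, end: date,
             telemetry_for: Callable[[date], Optional[str]],
             generate: Callable[[str, Memory], str],
             extract: Callable[[str], Memory],
             merge: Callable[[Memory, Memory], Memory],
             memory: Memory) -> tuple[dict[date, str], Memory]:
    """Replay past dates oldest-first, feeding memory forward day by day."""
    entries: dict[date, str] = {}
    for offset in range((end - start).days + 1):
        day = start + timedelta(days=offset)
        telemetry = telemetry_for(day)
        if not telemetry:
            continue  # no sessions recorded that day: nothing to generate
        # Pass 1: prose from today's telemetry plus everything remembered so far.
        entry = generate(telemetry, memory)
        # Pass 2: structured signals, merged before the next day reads memory.
        memory = merge(memory, extract(entry))
        entries[day] = entry
    return entries, memory
```

Because every call is stateless, the same loop serves both the one-off backfill and the daily run; the daily run is just `start == end`.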

What emerged was surprising. Entries generated with memory showed cross-references that the original sessions couldn't have produced. A decision made in late November about cache invalidation strategy surfaced as relevant context when generating a January entry about intake pipeline design — because the extraction pass had captured "content-addressed invalidation keyed on input cardinality" as a decision, and the January session's intake work touched the same architectural concern. The system connected dots that sequential generation without memory would have missed entirely.

But the backfill also revealed the self-referential trap. When you build a system that generates text about what you're building, and then extract memory from that text, and then use that memory to generate more text about what you're building — you get recursion. The first entry generated through the new memory system was, inevitably, a journal entry about building the memory system. The extraction pass dutifully pulled out themes about "memory extraction architecture" and "structured signal categories." The next day's generation incorporated those themes as prior context. Within a week, the memory store contained more entries about the memory pipeline than about any other topic.

Containing Recursion

The fix is dilution, not filtering. Filtering self-referential content requires the system to understand what counts as self-referential, which is itself a classification problem that compounds the recursion. Dilution works differently: by ensuring the generation pass receives input from multiple content types — session telemetry, RSS feeds, browser history, newsletter digests — the self-referential signal gets drowned out by external signal.

This is a structural solution, not a prompt engineering trick. The intake pipeline feeds content from eight external sources into the same context window as session data. When the generation pass sees that today's work on memory extraction happened alongside reading about distributed consensus protocols and browsing documentation on SQLite WAL mode, it produces an entry that includes the memory work but doesn't center it.
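A sketch of the assembly step, assuming the intake sources arrive as named text blobs. Giving every external source the same character budget is an assumption, not a documented detail of the pipeline; it keeps any one feed from dominating, and the section headers make session work visibly one input among several.

```python
def build_generation_context(session: str, memory_json: str,
                             external: dict[str, str],
                             per_source_chars: int = 2000) -> str:
    """Assemble one prompt: session telemetry, prior memory, external intake.

    Each external source is truncated to the same budget so no single feed,
    and no single topic, dominates the context window.
    """
    sections = [f"## Session telemetry\n{session}",
                f"## Prior memory\n{memory_json}"]
    # Sorted for a stable, reproducible prompt order; empty feeds are skipped.
    for name, text in sorted(external.items()):
        if text.strip():
            sections.append(f"## Intake: {name}\n{text[:per_source_chars]}")
    return "\n\n".join(sections)
```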

The ratio matters. Sessions where memory extraction was the primary topic produced entries that were 60-70% self-referential when session data was the only input source. Adding even two external content sources dropped that to under 30%. The external content doesn't need to be related to the session work. Its mere presence forces the generation pass to contextualize rather than navel-gaze.
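The text does not say how the self-referential share was measured. One crude but reproducible proxy is the fraction of sentences that mention the pipeline by name; the keyword list below is hypothetical and would need tuning, but it is enough to compare entries generated with and without external intake.

```python
import re

# Hypothetical keyword list; tune it to however the pipeline talks about itself.
SELF_TERMS = ("memory extraction", "memory store", "extraction pass", "memory pipeline")


def self_reference_ratio(entry: str, terms: tuple[str, ...] = SELF_TERMS) -> float:
    """Share of sentences in an entry that mention the pipeline itself."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", entry.strip()) if s]
    if not sentences:
        return 0.0
    hits = sum(any(term in s.lower() for term in terms) for s in sentences)
    return hits / len(sentences)
```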

What Breaks

The extraction pass is only as good as its prompt, and the failure mode is subtle. When the extraction prompt is too permissive, it captures observations that are true but useless: "today's work involved multiple files" or "the pipeline processed data successfully." These fill the memory store with noise that degrades generation quality gradually rather than catastrophically. You don't notice the problem until you read a generated entry that sounds vaguely competent but says nothing specific.

The fix is a specificity gate in the extraction prompt. Every extracted signal must reference a concrete technical artifact — a file, a function, a configuration choice, a specific error. "Improved error handling" fails the gate. "Added try/except with _HAS_PRAW flag for optional Reddit dependency" passes. This single constraint eliminated roughly half of the extracted signals during testing and dramatically improved the remaining half's utility as generation context.
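The gate described here lives in the extraction prompt. A deterministic post-filter can back it up; the patterns below (file names, calls, flags such as `_HAS_PRAW`, snake_case identifiers, named exceptions) are an assumed, deliberately crude definition of "concrete artifact", not the original implementation.

```python
import re

# Assumed, deliberately crude patterns for "references a concrete artifact".
ARTIFACT_PATTERNS = (
    re.compile(r"\b[\w./-]+\.(?:py|json|toml|ya?ml|md|sql|sh)\b"),  # file names
    re.compile(r"\b\w+\(\)"),                                      # calls: load()
    re.compile(r"\b_?[A-Z][A-Z0-9]*_[A-Z0-9_]+\b"),               # flags: _HAS_PRAW
    re.compile(r"\b[a-z][a-z0-9]*_[a-z0-9_]+\b"),                  # snake_case names
    re.compile(r"\b[A-Z]\w*(?:Error|Exception)\b"),                # named exceptions
)


def passes_specificity_gate(signal: str) -> bool:
    """True if the signal names at least one artifact-looking token."""
    return any(p.search(signal) for p in ARTIFACT_PATTERNS)


def apply_gate(signals: list[str]) -> tuple[list[str], list[str]]:
    """Split signals into (kept, rejected) so rejections can be logged."""
    kept, rejected = [], []
    for s in signals:
        (kept if passes_specificity_gate(s) else rejected).append(s)
    return kept, rejected
```

A regex gate is stricter and blunter than the prompt-level one: it would also reject a legitimate decision like "switched to dynamic workflow discovery via plugin-style extensions", so it is better used to log rejections for review than to drop signals silently.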

The other failure mode is temporal. Memory signals don't carry timestamps in the store — they're organized by category, not chronology. This means a decision from three months ago has the same weight as one from yesterday. For decisions, that's correct; they're load-bearing regardless of age. For themes, it's wrong. A theme about "monolith extraction" from week two shouldn't persist into week eight when the extraction is long finished. The supersession rule handles this in theory, but in practice, themes that don't get explicitly superseded linger.

I haven't solved this cleanly. The current approach is a manual review pass every few weeks, which defeats some of the automation purpose. A TTL on themes would work but introduces the staleness bugs that content-addressed invalidation was supposed to eliminate. The right answer is probably extraction-time obsolescence detection — checking whether a theme's referenced artifacts still appear in recent session data — but that adds another LLM call per extraction cycle.
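A rough approximation of obsolescence detection, without the extra LLM call, is plain string matching: pull artifact-looking tokens out of each theme and flag the theme when none of them appear in recent session data. This is a cheaper substitute for the LLM-based check, not the same thing. It misses renamed files and paraphrased references, so its output is a candidate list for the manual review pass rather than an automatic delete. The token rule (anything containing `_`, `.`, or `/`) is an assumption.

```python
def artifact_tokens(text: str) -> set[str]:
    """Tokens that look like code artifacts: they contain '_', '.', or '/'."""
    # Strip surrounding punctuation so "registry.py," matches "registry.py".
    tokens = (t.strip(".,;:()'\"") for t in text.split())
    return {t for t in tokens if len(t) > 2 and any(c in t for c in "_./")}


def stale_themes(themes: list[str], recent_sessions: list[str]) -> list[str]:
    """Themes whose artifacts appear nowhere in recent session data.

    Themes without a recognizable artifact are skipped, since this
    heuristic cannot judge them; they stay with manual review.
    """
    recent = "\n".join(recent_sessions)
    stale = []
    for theme in themes:
        artifacts = artifact_tokens(theme)
        if artifacts and not any(a in recent for a in artifacts):
            stale.append(theme)
    return stale
```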

The Compound Effect

Memory extraction's value is nonlinear. The first few days of accumulated memory produce modest improvements in generation quality. Around day ten, the system crosses a threshold where enough context has accumulated to enable genuine cross-session pattern recognition. Generated entries start referencing architectural decisions from prior weeks without being explicitly prompted to do so. Open questions from Tuesday get revisited on Friday when new evidence arrives. The system develops something that reads, from the outside, like continuity of thought.

This is not intelligence. It's structured context injection. But the distinction matters less than the output quality. A reader encountering the generated entries in sequence experiences a coherent narrative arc — problems identified, approaches tried, decisions made, consequences observed — that no single entry could produce alone. The memory store is the connective tissue.

The pattern generalizes beyond journal generation. Any sequential content system that needs continuity — release notes, project updates, recurring reports — faces the same fundamental problem: each generation pass starts with a blank context window. Memory extraction, structured into categories with lifecycle rules, is the minimal machinery needed to solve it. Four categories. Decay rules. A specificity gate. An append-only store. The rest is plumbing.