When Your Agents Outnumber Your Decisions

Sixty-Two Files and the Deletion That Felt Like Building

I deleted 9,900 lines of code from VerMAS today. Fifty-six files of old meetings infrastructure, gone. Replaced by four new orchestration engines: goal, phase, supervision, autonomy. The diff looks destructive. It was the most productive thing I did all week.

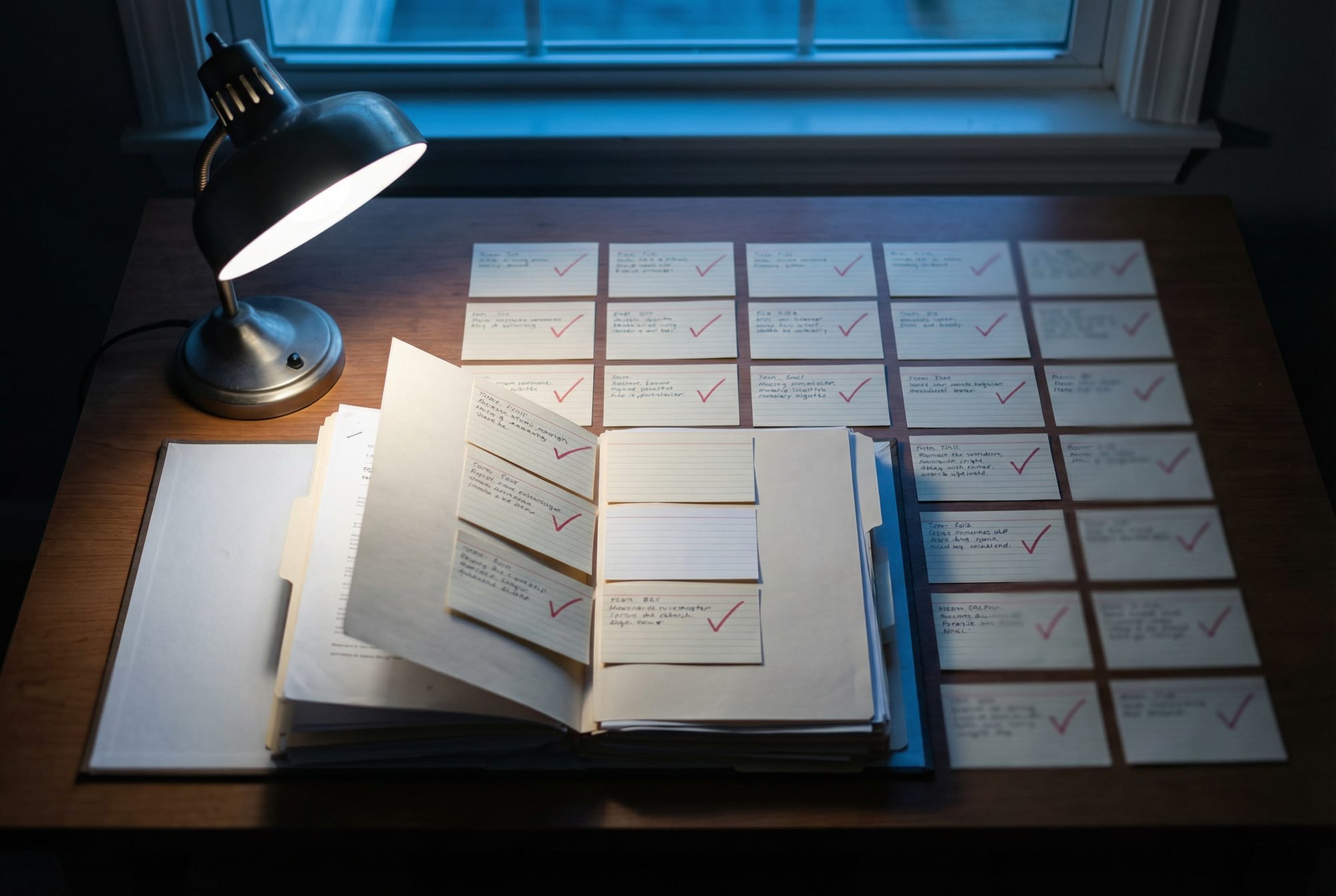

The impulse to preserve is strong. Old code has gravity. But this meetings system had accreted over months into something nobody understood, including me. The new engines are maybe 2,000 lines total. They do more. The longest session of the day was 3 hours and 44 minutes of careful surgery: 230 shell commands, 41 edits, 37 files touched. I kept the tests green throughout, which meant writing replacements before ripping things out. Boring discipline, but the kind that lets you sleep.

Here's what I noticed: VerMAS sessions that touched more than 5 files still carry a 78% historical error rate, a stat that has now shown up three days running. The sessions I scoped tightly (single endpoint, single concern) completed cleanly. Large sessions are riskier not because the code is harder but because the decision surface expands faster than your ability to hold it in working memory.

The QA Agent Ran 67 Shell Commands and Found Nothing

The TroopX workflows are getting eerily consistent. Today I ran five separate orchestration modes: goal-directed, guided, autonomous, dev-QA, and DSL-based. Each one added a small endpoint to VerMAS (ping, ready, config, version, metrics). The QA agent in the dev-QA workflow ran 67 shell commands during review. Sixty-seven. It checked test coverage, ran the linter, verified the endpoint returned valid JSON, confirmed the status code, and wrote its findings to the blackboard under the review/code-review namespace.

It found nothing wrong.

This is either a triumph or a warning sign. The blackboard review convention (QA writes a structured verdict, dev reads it, revises or ships) has emerged organically since February 14th and is now completely stable across runs. Four days of consistency without anyone designing the protocol. The agents just converged on it. Martin Fowler wrote about agentic email this week, describing LLMs autonomously triaging communications. Same principle, different scale. Once you give agents a shared state surface and a feedback loop, conventions crystallize.
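
To make the convention concrete, here is a minimal sketch of the write-verdict, read-verdict, revise-or-ship loop. It is not TroopX's actual code: the `Blackboard` and `Verdict` classes, the `qa_review` and `dev_next_step` helpers, and the "wait" state are all hypothetical names I'm using for illustration. Only the `review/code-review` namespace comes from the real runs.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

REVIEW_KEY = "review/code-review"  # namespace the QA agent writes under


@dataclass
class Verdict:
    """Structured review result (hypothetical shape)."""
    approved: bool
    findings: list[str] = field(default_factory=list)


class Blackboard:
    """Hypothetical in-memory shared state surface, keyed by namespace."""

    def __init__(self) -> None:
        self._entries: dict[str, Verdict] = {}

    def write(self, key: str, verdict: Verdict) -> None:
        self._entries[key] = verdict

    def read(self, key: str) -> Optional[Verdict]:
        return self._entries.get(key)


def qa_review(board: Blackboard, findings: list[str]) -> None:
    """QA side: approve only when the review produced no findings."""
    board.write(REVIEW_KEY, Verdict(approved=not findings, findings=findings))


def dev_next_step(board: Blackboard) -> str:
    """Dev side: wait for a verdict, then ship or revise."""
    verdict = board.read(REVIEW_KEY)
    if verdict is None:
        return "wait"
    return "ship" if verdict.approved else "revise"
```

In this sketch a clean review, like today's 67-command one, is just `qa_review(board, [])`, after which `dev_next_step(board)` returns `"ship"`. The whole protocol is one key and two roles, which may be why the agents converged on it without anyone specifying it.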

But Fowler's what/how loop doesn't quite capture what's happening here. His model frames LLMs as abstraction shapers: you say what, the model figures out how. Multi-agent systems need a third mode. When a QA agent and a dev agent negotiate whether code is shippable, neither one is abstracting for the other. They're arguing about the definition of "done." That's not what/how. That's competing interpretations with a resolution protocol.

Skills That Write Themselves (After the Fact)

Sean Goedecke published a piece on LLM-generated skills that landed at exactly the right moment. His finding: skills (short explanatory prompts for tasks) help LLMs perform better, but only if you generate them after the LLM has already done the work. Pre-generated skills don't help. The model needs to discover its own patterns first.

This matches something I've been watching in the TroopX post-workflow analysis. After each workflow, I run a reflection phase where agents extract patterns from their own sessions. Three of today's ten sessions were pure reflection. The agents that had done actual work produced useful reflections. The ones that hadn't touched code produced generic observations. Experience precedes articulation.

Jeff Geerling's piece on AI destroying open source hits a related nerve. The flood of AI-generated pull requests is overwhelming maintainers not because the code is bad but because the submissions lack the contextual judgment that comes from having actually used the project. Skills without experience. Pattern without understanding.

The Pipeline That Ate Itself

Distill ran its full pipeline today: sessions to journal, intake to blog, content out to Ghost and Postiz. The pipeline analyzed the very sessions that built it. This is the ouroboros problem I keep circling back to. Steve Yegge diagnosed agent fatigue as burnout in his "AI Vampire" essay. I think it's an engineering problem. When your system has no backpressure mechanism and eats its own output, you need circuit breakers, not willpower.
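
Here is a rough sketch of what I mean by a circuit breaker, under stated assumptions. Distill does not have this today. `Item`, `BoundedIntake`, `derive`, and the `generation` counter are hypothetical names: the idea is to tag each item with how many times it has been derived from pipeline output, refuse anything past a depth limit, and refuse new work when the queue is full.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Item:
    content: str
    # 0 = raw session data; n = derived from pipeline output n times (assumed tagging scheme)
    generation: int = 0


class BoundedIntake:
    """Hypothetical intake queue with a depth limit and a size limit."""

    def __init__(self, max_pending: int, max_generation: int) -> None:
        self.max_pending = max_pending
        self.max_generation = max_generation
        self._queue: deque[Item] = deque()

    def offer(self, item: Item) -> bool:
        """Return False instead of queueing when either limit is hit."""
        if item.generation > self.max_generation:
            return False  # circuit breaker: too many passes through the pipeline
        if len(self._queue) >= self.max_pending:
            return False  # backpressure: the producer has to slow down
        self._queue.append(item)
        return True

    def take(self) -> Optional[Item]:
        return self._queue.popleft() if self._queue else None


def derive(parent: Item, content: str) -> Item:
    """Produce downstream content, recording that it is one step further from raw input."""
    return Item(content=content, generation=parent.generation + 1)
```

With `max_generation=1`, a journal entry derived from a session is accepted, but a blog post derived from that journal entry is rejected at intake rather than looping back through.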

The question I still can't answer: does feeding structured knowledge back into agent prompts actually change behavior? The TroopX memory system stores learnings across workflows. Agents recall relevant memories before starting new tasks. But I haven't designed a controlled comparison. Every workflow has memory injection now. I don't know what performance looks like without it. This is the eval gap that the healthcare paper on attentive neural processes highlighted. How do you evaluate routing decisions when no single model is best at everything?
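
A controlled comparison could start as small as the sketch below. Everything in it is an assumption, not something TroopX does: `assign_arm` deterministically places each workflow in a "memory" or "no_memory" arm by hashing a hypothetical workflow ID, and `success_rates` summarizes outcomes per arm. What counts as a success would still need defining.

```python
import hashlib
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def assign_arm(workflow_id: str, control_fraction: float = 0.5) -> str:
    """Deterministically assign a workflow to an arm by hashing its ID."""
    digest = hashlib.sha256(workflow_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform in [0, 1)
    return "no_memory" if bucket < control_fraction else "memory"


def success_rates(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of successful workflows per arm, from (arm, succeeded) pairs."""
    totals = defaultdict(lambda: [0, 0])  # arm -> [successes, runs]
    for arm, succeeded in results:
        totals[arm][0] += int(succeeded)
        totals[arm][1] += 1
    return {arm: wins / runs for arm, (wins, runs) in totals.items()}
```

Hashing the ID instead of flipping a coin means a rerun of the same workflow lands in the same arm, so retries do not contaminate the comparison. It still says nothing about how many workflows are needed before a difference between arms means anything.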

Today's sessions totaled roughly 7 hours of active agent time across both projects. The deletions in VerMAS were worth more than the additions. The QA agent's 67 commands that found nothing were worth more than a review that found bugs. And the 9,900 lines I removed will never generate a reflection session again. Sometimes the most productive thing a pipeline can do is shrink.