Structured Extraction Is Not Summarization

Every recurring pipeline has a memory problem. Batch jobs, nightly builds, daily reports, CI runs: each invocation starts fresh, blind to what happened last time. The standard fix is to summarize previous runs and feed that summary forward. This is the wrong approach. Summarization destroys exactly the information that makes memory useful.

The distinction matters because LLM-powered pipelines are making this mistake at scale. A system that summarizes its own output before feeding it to the next run is building a game of telephone with itself. Each cycle smooths away the edges, the caveats, the half-formed threads that a future run could have picked up and advanced. What survives summarization is what was already obvious. What gets lost is what was interesting.

The Three-Step Loop

The pattern that actually works has three distinct phases: generate, extract, inject. You generate the output. A separate process extracts structured fields from it. Those fields get injected into the next run's context. Each phase is its own subprocess, its own prompt, its own debuggable unit.

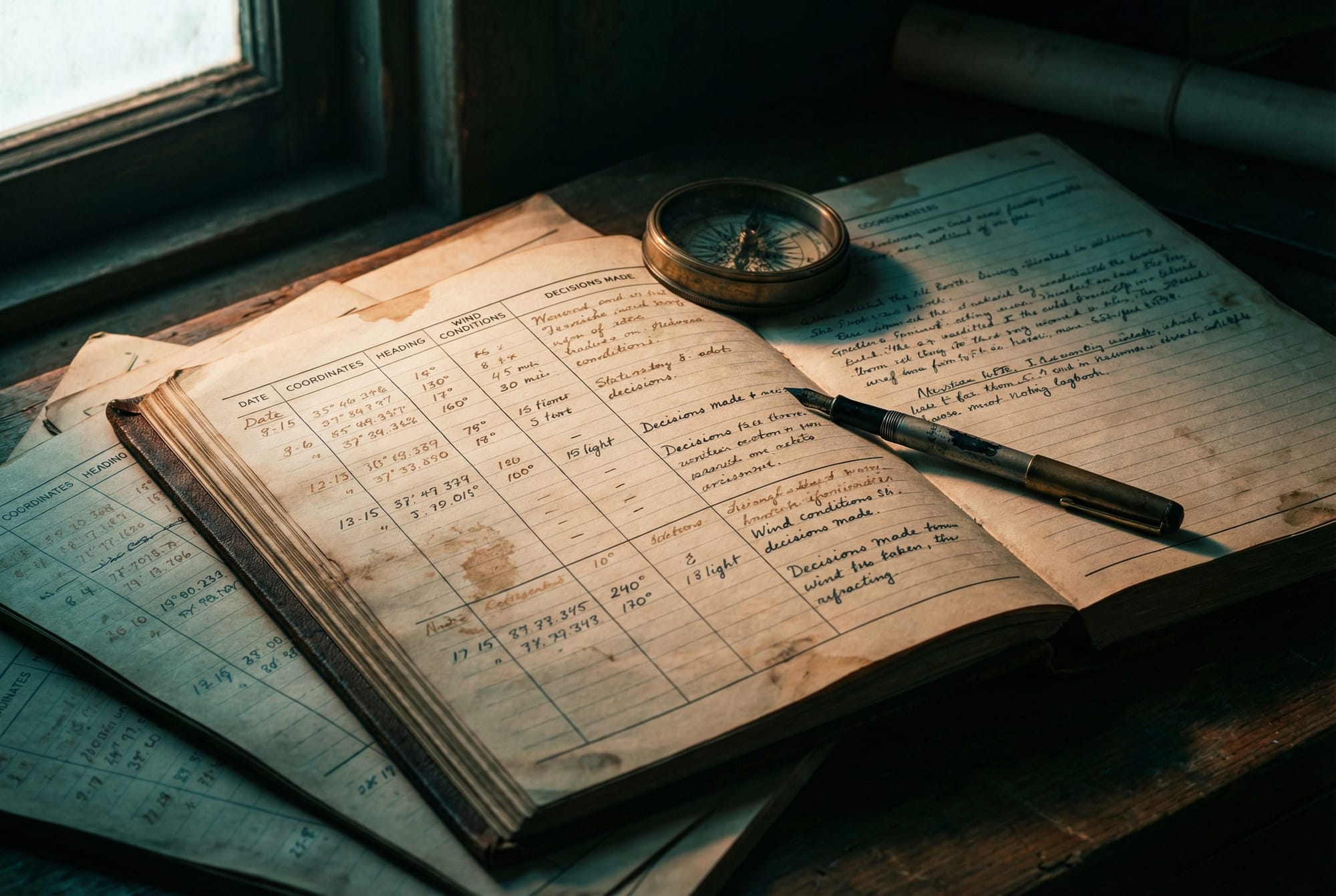
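To make the three phases concrete, here is a minimal sketch in Python. In the pipeline described here each phase is its own subprocess with its own prompt. In this sketch they are plain functions, and the LLM calls are replaced by stubs. The names `generate`, `extract`, `inject`, `run_cycle`, and the `Memory` fields are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Memory:
    """Typed fields carried from one run to the next (hypothetical schema)."""
    themes: List[str] = field(default_factory=list)
    decisions: List[str] = field(default_factory=list)
    open_questions: List[str] = field(default_factory=list)
    next_steps: List[str] = field(default_factory=list)


def generate(raw_input: str, context: str) -> str:
    """Phase 1 (stub for the generation LLM call). It knows nothing about
    memory; it only sees whatever context string it is handed."""
    return f"Entry for {raw_input}. Prior context: {context or 'none'}."


def extract(output: str) -> Memory:
    """Phase 2 (stub for a separate extraction LLM call with its own prompt).
    A real call would return JSON; here one field is faked from the output."""
    themes = ["continuity"] if "Entry for" in output else []
    return Memory(themes=themes)


def inject(memory: Optional[Memory]) -> str:
    """Phase 3: serialize typed fields as explicit input for the next prompt."""
    if memory is None:
        return ""
    return json.dumps(asdict(memory), sort_keys=True)


def run_cycle(raw_input: str, memory: Optional[Memory]) -> Tuple[str, Memory]:
    """The orchestrator is the only code that knows about all three phases."""
    output = generate(raw_input, inject(memory))
    return output, extract(output)


# Usage: Wednesday's run sees what was extracted from Monday's output.
monday_output, monday_memory = run_cycle("Monday", None)
wednesday_output, _ = run_cycle("Wednesday", monday_memory)
print(wednesday_output)
```

Because each phase only exchanges plain strings or typed records with the orchestrator, any one of them can be rerun or inspected on its own.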

I built this for a journal generation pipeline that synthesizes daily entries from raw coding session data. The problem was continuity. Each day's entry was written in isolation, so Monday's entry might introduce a decision, and Wednesday's entry would introduce the same decision again as if it were new. There was no narrative thread.

The fix was not to summarize Monday's entry and paste it into Wednesday's prompt. The fix was to extract typed fields: themes as a list, decisions with their rationale, open questions that remain unresolved, and what was planned next. That structured context goes into the next day's prompt as explicit input, not as prose the model has to parse and interpret.
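A sketch of what "typed fields" can mean in practice follows. It assumes the extraction step returns JSON with four keys. The key names `themes`, `decisions` (each with `what` and `rationale`), `open_questions`, and `planned_next` are hypothetical, chosen to mirror the fields listed above. Parsing fails loudly on a missing field, and rendering puts each field under its own label so the next prompt gets explicit input rather than prose.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Decision:
    what: str
    rationale: str


@dataclass(frozen=True)
class EntryMemory:
    themes: List[str]
    decisions: List[Decision]
    open_questions: List[str]
    planned_next: List[str]


def parse_extraction(raw: str) -> EntryMemory:
    """Parse the extraction step's JSON. A missing key raises KeyError,
    so a malformed record never reaches the next prompt."""
    data = json.loads(raw)
    return EntryMemory(
        themes=[str(t) for t in data["themes"]],
        decisions=[Decision(d["what"], d["rationale"]) for d in data["decisions"]],
        open_questions=[str(q) for q in data["open_questions"]],
        planned_next=[str(p) for p in data["planned_next"]],
    )


def render_context(mem: EntryMemory) -> str:
    """Render each field under its own heading for the next day's prompt."""
    lines = ["PRIOR THEMES:"] + [f"- {t}" for t in mem.themes]
    lines += ["PRIOR DECISIONS:"] + [f"- {d.what} (because: {d.rationale})" for d in mem.decisions]
    lines += ["OPEN QUESTIONS:"] + [f"- {q}" for q in mem.open_questions]
    lines += ["PLANNED NEXT:"] + [f"- {p}" for p in mem.planned_next]
    return "\n".join(lines)


raw = json.dumps({
    "themes": ["memory"],
    "decisions": [{"what": "use typed fields", "rationale": "summaries lose caveats"}],
    "open_questions": ["how often to review?"],
    "planned_next": ["backfill entries"],
})
print(render_context(parse_extraction(raw)))
```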

The difference in output quality was immediate. When I backfilled two months of historical entries with this loop running, the November entries (no prior context) read like status updates. By January, entries were referencing earlier decisions, advancing open questions, and building arguments across weeks. The memory didn't just add context. It gave the model something to push against.

Why Summaries Fail

Reid Hoffman and Sam Altman have both described the challenge of maintaining "institutional memory" in fast-moving organizations. The analogy to LLM pipelines is precise. When a company summarizes last quarter's learnings into a slide deck, the nuance evaporates. When a pipeline summarizes last run's output into a paragraph, the same thing happens.

Consider what a summary produces: "Explored storage options and selected a vector database." That sentence is technically accurate and functionally useless. It gives the next run nothing to work with. No decision to revisit, no constraint to respect, no thread to continue.

Now consider what structured extraction produces: a decision field containing "pgvector selected over FAISS because the workload requires SQL joins against metadata columns," paired with a revisit condition: "if query latency exceeds 50ms at the p95." That's a hook. The next run can reference the decision, test the revisit condition, or build on the rationale. The structured format preserves the connective tissue that summaries dissolve.
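One way to make a revisit condition testable rather than decorative is to store it as a threshold that a later run can check against measurements. The sketch below assumes latency samples in milliseconds are available to the pipeline and computes p95 with the nearest-rank method. The `Decision` record and its field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


def p95(samples_ms: Sequence[float]) -> float:
    """95th percentile by the nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n), 1-based
    return ordered[rank - 1]


@dataclass(frozen=True)
class Decision:
    choice: str
    rejected: str
    rationale: str
    revisit_if_p95_ms_exceeds: float

    def should_revisit(self, latencies_ms: Sequence[float]) -> bool:
        """True when measured p95 latency breaks the recorded condition."""
        return p95(latencies_ms) > self.revisit_if_p95_ms_exceeds


decision = Decision(
    choice="pgvector",
    rejected="FAISS",
    rationale="workload requires SQL joins against metadata columns",
    revisit_if_p95_ms_exceeds=50.0,
)
```

A later run can call `decision.should_revisit(samples)` and either reaffirm the choice or flag it, which is exactly the hook a prose summary cannot provide.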

This is not a new insight in database design (event sourcing preserves the full history, while a snapshot keeps only the current state), but it's underappreciated in LLM pipeline architecture. Most teams reaching for "memory" in their AI systems default to summarization because it feels natural. Summaries are what humans write. But the consumer of this memory is not a human skimming a report; it's a model that performs better with explicit, structured, parseable context.

Separation Saves You at 3 AM

Keeping extraction as a separate subprocess call rather than inlining it into the generation step costs latency: one extra LLM call per cycle. I kept it separate anyway, and the first debugging session vindicated that choice.

When a journal entry referenced a technical decision that was never actually made, I needed to answer two questions: did the generation step hallucinate it, or did the extraction step hallucinate it from a previous entry? With separate processes, I could inspect the generation output independently, then inspect the extraction output, and trace exactly where the bad context entered the chain. With an inlined approach, the generation and extraction are entangled in one prompt, and debugging becomes archaeology.
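Separate stages only help if each stage's output is kept. A minimal sketch, assuming every generation and extraction output is logged per cycle, can answer the "where did this enter the chain?" question mechanically. `StageRecord` and `first_appearance` are hypothetical names, and substring matching only finds verbatim reappearances, so it narrows the search rather than replacing a human reading the chain.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class StageRecord:
    cycle: int
    stage: str  # "generation" or "extraction"
    text: str


def first_appearance(claim: str, log: List[StageRecord]) -> Optional[Tuple[int, str]]:
    """Return (cycle, stage) where the claim first shows up, or None.
    Within a cycle, generation runs before extraction, so it sorts first."""
    needle = claim.lower()
    ordered = sorted(log, key=lambda r: (r.cycle, 0 if r.stage == "generation" else 1))
    for rec in ordered:
        if needle in rec.text.lower():
            return rec.cycle, rec.stage
    return None


log = [
    StageRecord(1, "generation", "Compared queues and cron jobs."),
    StageRecord(1, "extraction", '{"decisions": ["event-driven triggers for all agents"]}'),
    StageRecord(2, "generation", "Building on event-driven triggers for all agents..."),
]
print(first_appearance("event-driven triggers", log))
```

If the first hit is an extraction record, the extraction step introduced the claim; if it is a generation record, the generator did.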

The fan-in/fan-out architecture reinforces this. Generation doesn't know about memory. Extraction doesn't know about journals. The orchestrator wires them together. Swap the extraction strategy without touching generation. Add new memory sources without changing the extraction format. The interfaces are clean because the processes are separate.
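The interface boundaries can be sketched with a structural `Protocol`: the generator receives merged context and never sees an extractor, and any object with an `extract` method can be swapped in. Everything here is hypothetical for illustration. `PrefixExtractor` stands in for an LLM extraction call, `stub_generate` for the generation call, and `fan_in` merges several memory sources field by field.

```python
from typing import Callable, Dict, Iterable, List, Protocol, Tuple

Fields = Dict[str, List[str]]


class Extractor(Protocol):
    """Any extraction strategy: takes generated text, returns typed fields."""
    def extract(self, output: str) -> Fields: ...


class PrefixExtractor:
    """Stand-in extractor: collects lines starting with 'DECIDED:'."""
    def extract(self, output: str) -> Fields:
        prefix = "DECIDED:"
        decisions = [line[len(prefix):].strip()
                     for line in output.splitlines() if line.startswith(prefix)]
        return {"decisions": decisions}


def fan_in(sources: Iterable[Fields]) -> Fields:
    """Merge memory sources field by field, dropping exact duplicates."""
    merged: Fields = {}
    for fields in sources:
        for key, values in fields.items():
            bucket = merged.setdefault(key, [])
            for value in values:
                if value not in bucket:
                    bucket.append(value)
    return merged


def stub_generate(raw_input: str, context: Fields) -> str:
    """Stand-in generator: receives context, knows nothing about extraction."""
    return "\n".join([
        f"Entry: {raw_input}",
        f"Context fields: {', '.join(sorted(context))}",
        "DECIDED: keep extraction separate",
    ])


def orchestrate(generate: Callable[[str, Fields], str], extractor: Extractor,
                sources: Iterable[Fields], raw_input: str) -> Tuple[str, Fields]:
    """Fan in memory, run generation, then hand the output to extraction."""
    output = generate(raw_input, fan_in(sources))
    return output, extractor.extract(output)


sources = [
    {"decisions": ["use pgvector"]},
    {"decisions": ["use pgvector"], "open_questions": ["p95 budget?"]},
]
output, fields = orchestrate(stub_generate, PrefixExtractor(), sources, "day 3")
```

Replacing `PrefixExtractor` with a different class that has the same `extract` signature requires no change to `stub_generate`, and adding a third memory source is just another dict in `sources`.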

This mirrors a lesson the distributed systems community learned about separating writes from reads: CQRS (Command Query Responsibility Segregation) works not because it's theoretically elegant but because it makes failures legible. The same principle applies when your "writes" are LLM generations and your "reads" are structured extractions feeding the next cycle.

The Compounding Problem Nobody Warns You About

Here's what the pattern reveals that's harder to see from the outside: structured extraction compounds errors with the same efficiency it compounds improvements.

A typed decision field that says "we chose event-driven triggers for all agent coordination" sounds authoritative. If that decision was hallucinated during extraction, the next cycle treats it as ground truth. The cycle after that builds on it. By cycle four, you have a confidently stated architectural direction that nobody actually chose, load-bearing in the context of every subsequent run.

This is not a theoretical risk. I watched it happen during a multi-cycle pipeline run where an extraction attributed a decision to a session that never made it. Three cycles later, the pipeline was referencing that decision as established context, and the output read as perfectly coherent. The error was invisible to automated checks because the format was correct. Only a human reading the chain spotted it.
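Format checks pass on a well-formed lie, so any automated guard has to look at content. One partial option, assuming the extraction prompt is also asked to return a verbatim evidence quote and the session it came from (an assumption, not part of the pipeline described here), is to flag decisions whose quote does not appear in the cited session. `ExtractedDecision` and `unsupported` are hypothetical names. This would catch a misattribution like the one above only if the extractor's quote is honest, so it narrows what a reviewer has to read rather than replacing the reviewer.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ExtractedDecision:
    text: str
    session_id: str
    evidence: str  # verbatim quote the extractor claims supports the decision


def unsupported(decisions: List[ExtractedDecision],
                sessions: Dict[str, str]) -> List[ExtractedDecision]:
    """Return decisions whose evidence is empty or absent from the cited session."""
    flagged = []
    for d in decisions:
        source = sessions.get(d.session_id, "")
        if not d.evidence or d.evidence not in source:
            flagged.append(d)
    return flagged


sessions = {
    "s1": "We compared cron and queues; decided to keep cron for now.",
    "s2": "Refactored the parser.",
}
good = ExtractedDecision("keep cron", "s1", "decided to keep cron")
bad = ExtractedDecision("event-driven triggers for all agent coordination",
                        "s2", "event-driven triggers")
print(unsupported([good, bad], sessions))
```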

The forcing function I landed on: the extraction output must be reviewed by a human before injection into the next cycle. If nobody reviews it, the cycle runs without memory (stateless degradation rather than contaminated state). This makes the cost of skipping review visible in the output quality rather than hidden in gradually drifting context.
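The review gate itself can be very small. A sketch, assuming each cycle's extraction is held as pending until someone signs off: `PendingMemory`, `approved_by`, and `context_for_next_cycle` are hypothetical names. The important property is the default: without approval, the next cycle gets no memory at all rather than unreviewed memory.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PendingMemory:
    fields_json: str
    approved_by: Optional[str] = None  # set only when a human signs off


def context_for_next_cycle(pending: Optional[PendingMemory]) -> str:
    """Inject memory only if a human approved it; otherwise run stateless."""
    if pending is None or not pending.approved_by:
        return ""  # stateless degradation instead of contaminated state
    return pending.fields_json


pending = PendingMemory('{"decisions": ["use pgvector"]}')
before_review = context_for_next_cycle(pending)  # unreviewed, so empty
pending.approved_by = "reviewer"
after_review = context_for_next_cycle(pending)
```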

When to Use This Pattern

The generate-extract-inject loop applies anywhere you have a recurring LLM pipeline that would benefit from cross-run continuity. Daily report generation. Weekly digest synthesis. Automated code review that should learn from previous reviews. Content pipelines that build narrative over time.

The implementation requirements are modest. You need typed extraction fields specific to your domain (not a generic "summary" field). You need the extraction as a separate call, not bundled into generation. And you need a human in the loop at a cadence faster than the rate at which errors compound.

The temptation will be to start with summarization because it's simpler. Resist it. The difference between "we discussed storage options" and a typed decision with rationale and revisit conditions is the difference between memory that decays and memory that compounds. Every pipeline that runs more than once deserves the version that compounds.